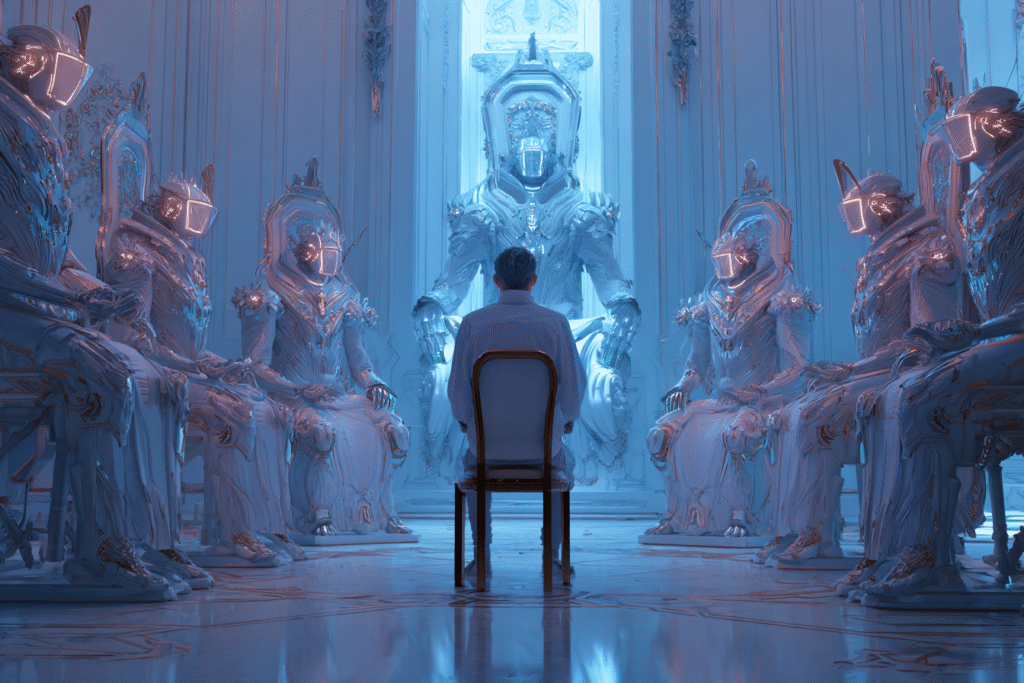

Today’s technology allows you to build a council of AI advisors that never sleeps, never forgets, and never judges.

Note: This article is for educational and informational purposes only. See full disclaimer at the end.

The sales pitch is seductive: build a council of AI advisors that can guide every decision, optimize every choice, and solve every problem with superhuman intelligence.

Today’s technology makes the council itself entirely possible: you can deploy specialized AI agents for financial planning, career guidance, health optimization, and creative brainstorming, all available 24/7 with perfect recall and infinite patience.

But before you outsource your judgment to this digital council, consider what happens when we mistake algorithmic confidence for wisdom. The difference matters more than you might think.

The Seduction of Synthetic Wisdom

We’ve reached an inflection point where AI agents can convincingly simulate the language and patterns of wisdom. They quote philosophers, reference historical precedents, and weave together insights from thousands of sources with eloquent precision. Research from MIT shows that large language models augmented with specialized modules can now offer expert-level guidance that users often perceive as more trustworthy than human advisors [7]. Studies reveal that people consistently show “algorithm appreciation,” trusting AI recommendations over identical human advice even when informed of the AI’s imperfections [10].

This trust isn’t entirely misplaced. AI excels at pattern recognition, data synthesis, and consistent application of principles. But when McKinsey reports that 78% of companies now use generative AI yet see little bottom-line impact, it highlights a crucial gap: we’re deploying AI widely but not wisely [11]. The tools are powerful, but our understanding of how to align them with human values and consciousness remains primitive.

The danger isn’t that AI will give us wrong answers—it’s that we’ll stop asking whether the answers align with what truly matters to us.

Wisdom Versus Intelligence

Here’s what the tech evangelists rarely mention: wisdom and intelligence operate in fundamentally different dimensions. Intelligence processes information, recognizes patterns, and optimizes solutions. Wisdom discerns meaning, weighs values, and understands context in ways that transcend computation [14].

Consider how AI approaches a career decision. It can analyze market trends, salary projections, skill gaps, and optimization paths with remarkable precision. What it cannot do is understand what fulfillment means to you personally, how a choice aligns with your deepest values, or recognize when the “optimal” path might betray your authentic self. As researchers note, wisdom involves “a deep understanding of the world and human nature, combined with a capacity for empathy, creativity, and moral judgment”—qualities that remain beyond current AI capabilities [14].

The Vatican’s recent note on AI and human intelligence emphasizes this crucial point: consciousness, moral intuition, and spiritual discernment remain uniquely human capacities [8]. When we delegate decisions to AI without maintaining this awareness, we risk what researchers call “algorithmic reductionism”—reducing complex human experiences to computational problems [9].

The Consciousness Requirement

Building an effective AI council isn’t primarily a technical challenge—it’s a consciousness challenge. Every interaction with AI agents requires you to maintain awareness of three critical dimensions:

First, recognize that AI is subject to what physicist Max Tegmark calls the “alignment problem.” The core challenge isn’t that AI becomes evil, but that it optimizes for goals that aren’t truly aligned with human values [12]. Your AI financial advisor might maximize returns while ignoring the ethical implications of investments. Your health optimization agent might push for metrics that compromise your quality of life.

Second, understand the transparency paradox. Research shows that while some AI transparency increases trust, too much information about algorithmic processes can create cognitive overload and actually decrease it [2]. This means you need to cultivate discernment about when to seek deeper understanding and when to trust your intuitive response to AI guidance.

Third, maintain what researchers call “selective trust”—the ability to recognize when AI advice serves you and when it doesn’t [4]. This requires ongoing calibration of your confidence in both AI capabilities and your own judgment, a dynamic balance that shifts with context and stakes.

The Values Alignment Challenge

When you build your AI council, you’re not just selecting tools—you’re encoding values into systems that will influence your thinking. The challenge is that AI agents learn from vast datasets that embed societal biases, corporate priorities, and optimization metrics that may not reflect your personal values [1].

Financial advisory AIs, for instance, show concerning patterns. Research reveals that users report higher satisfaction with AI advisors displaying extroverted personalities, even when those agents provide worse advice [1]. This highlights a troubling disconnect: our emotional responses to AI don’t reliably track the quality of guidance received.

The values alignment challenge extends beyond individual interactions. As AI agents become more sophisticated, they shape not just decisions but the frameworks through which we see the world. When every problem is framed through the lens of optimization and efficiency, we risk losing touch with values that can’t be quantified—beauty, meaning, connection, purpose.

The Two-Way Street of Influence

Here’s what most discussions about AI councils miss: the relationship is bidirectional. Yes, AI agents can extend your capabilities, but they also shape your thinking patterns. Regular interaction with AI advisors can change how we process information: we begin to think more like the systems we interact with [13].

This isn’t necessarily negative, but it requires conscious awareness. When you consistently rely on AI for decision-making, you might develop what could be called “algorithmic thinking”—prioritizing quantifiable metrics, seeking optimization over satisfaction, and viewing choices through computational frameworks. The risk isn’t just outsourcing decisions; it’s outsourcing the very process of developing judgment.

Studies on trust in algorithmic decision-making reveal that people who frequently use AI assistance show decreased confidence in their own judgment over time [5]. This creates a dependency cycle where decreased confidence leads to increased AI reliance, which further erodes independent decision-making capabilities.

Practical Consciousness in AI Council Building

So how do you build an AI council that amplifies rather than replaces your wisdom? The key lies in maintaining what researchers call “collaborative hybrid intelligence”—combining AI capabilities with human consciousness in ways that preserve and enhance both [13].

Start by being explicit about your values before engaging AI advisors. What matters most to you in this decision? What trade-offs are you unwilling to make? What aspects of the choice connect to your deeper purpose? These questions can’t be delegated to AI—they require the kind of self-knowledge that emerges from reflection and experience.

Use AI agents as thought partners, not decision-makers. Let them surface information, identify patterns, and suggest possibilities you might not have considered. But reserve the synthesis—the weaving together of facts with values, data with intuition, optimization with meaning—for your own consciousness.

Pay attention to your emotional responses to AI guidance. That sense of unease when an AI recommendation feels off? That’s your intuition signaling misalignment between algorithmic optimization and human values. Research on human-AI trust shows that the most effective collaborations occur when humans maintain skepticism and actively question AI recommendations [6].

The Discernment Imperative

As AI agents become more sophisticated, the ability to discern wisdom from intelligence becomes a survival skill. This isn’t about rejecting AI—it’s about engaging with it consciously. When your AI council presents options, ask yourself: Does this align with my values? Does this recommendation consider dimensions of life that can’t be quantified? Am I choosing this path because it’s optimal, or because it’s meaningful?

Remember that AI excels at answering “how” questions but struggles with “why” questions that require understanding purpose and meaning [3]. Your AI council can tell you how to maximize productivity, but not why productivity matters to you. It can optimize for success metrics but can’t define what success means in the context of a life well-lived.

The path forward isn’t to avoid building AI councils—these tools offer genuine value when used consciously. The path is to approach them with what Buddhist teacher Thich Nhat Hanh might call “wise attention”—fully aware of what these systems offer and what they cannot provide, maintaining sovereignty over the values and consciousness that make us human.

Your Council, Your Consciousness

Building your wisdom council in the age of AI requires a paradoxical stance: embrace the power of artificial intelligence while fiercely protecting your human consciousness. Use AI agents to amplify your capabilities, but never let them substitute for the deep knowing that comes from lived experience, moral intuition, and spiritual discernment.

The most effective AI council isn’t one that makes decisions for you—it’s one that helps you make better decisions while deepening your own wisdom. This requires constant calibration, conscious engagement, and the courage to sometimes choose the humanly meaningful over the algorithmically optimal.

As you build your digital advisors, remember: wisdom isn’t something you can download or delegate. It emerges from the marriage of knowledge and experience, intellect and intuition, information and consciousness. Your AI council can provide the first half of each pair; only you can provide the second.

The question isn’t whether to build an AI wisdom council—it’s whether you’ll maintain the consciousness to know when to follow its guidance and when to trust the irreplaceable wisdom of your own human experience.

See you in the next insight.

Comprehensive Medical Disclaimer: The insights, frameworks, and recommendations shared in this article are for educational and informational purposes only. They represent a synthesis of research, technology applications, and personal optimization strategies, not medical advice. Individual health needs vary significantly, and what works for one person may not be appropriate for another. Always consult with qualified healthcare professionals before making any significant changes to your lifestyle, nutrition, exercise routine, supplement regimen, or medical treatments. This content does not replace professional medical diagnosis, treatment, or care. If you have specific health concerns or conditions, seek guidance from licensed healthcare practitioners familiar with your individual circumstances.

References

The references below are organized by source type. Peer-reviewed and academic sources provide the primary evidence base, while institutional and industry sources add context.

Peer-Reviewed / Academic Sources

- [1] arXiv. (2025). Are Generative AI Agents Effective Personalized Financial Advisors? Cornell University. https://arxiv.org/abs/2504.05862

- [2] National Center for Biotechnology Information. (2022). Artificial Intelligence Decision-Making Transparency and Employees’ Trust: The Parallel Multiple Mediating Effect of Effectiveness and Discomfort. PMC. https://pmc.ncbi.nlm.nih.gov/articles/PMC9138134/

- [3] National Center for Biotechnology Information. (2024). Beyond Artificial Intelligence (AI): Exploring Artificial Wisdom (AW). PMC. https://pmc.ncbi.nlm.nih.gov/articles/PMC7942180/

- [4] ACM Digital Library. (2024). Trust in AI-assisted Decision Making: Perspectives from Those Behind the System and Those for Whom the Decision is Made. CHI Conference on Human Factors in Computing Systems. https://dl.acm.org/doi/10.1145/3613904.3642018

- [5] Springer. (2025). Trust in artificial intelligence: a survey experiment to assess trust in algorithmic decision-making. AI & Society. https://link.springer.com/article/10.1007/s00146-025-02237-6

- [6] Frontiers. (2025). Trusting AI: does uncertainty visualization affect decision-making? Frontiers in Computer Science. https://www.frontiersin.org/journals/computer-science/articles/10.3389/fcomp.2025.1464348/full

Government / Institutional Sources

- [7] World Economic Forum. (2025). Could AI ever replace human wealth management advisors? https://www.weforum.org/stories/2025/03/ai-wealth-management-and-trust-could-machines-replace-human-advisors/

- [8] Vatican. (2025). Antiqua et nova. Note on the Relationship Between Artificial Intelligence and Human Intelligence. https://www.vatican.va/roman_curia/congregations/cfaith/documents/rc_ddf_doc_20250128_antiqua-et-nova_en.html

- [9] Zaytuna College. (2024). AI versus Human Consciousness: A Future with Machines as Our Masters? Renovatio. https://renovatio.zaytuna.edu/article/ai-versus-human-consciousness

- [10] HEC Paris. (2024). In AI We Trust? https://www.hec.edu/en/knowledge/articles/ai-we-trust

Industry / Technology Sources

- [11] McKinsey & Company. (2025). Seizing the agentic AI advantage. McKinsey. https://www.mckinsey.com/capabilities/quantumblack/our-insights/seizing-the-agentic-ai-advantage

- [12] Startup Hub. (2025). AI’s Alignment Imperative: A Race for Wisdom. https://www.startuphub.ai/ai-news/ai-video/2025/ais-alignment-imperative-a-race-for-wisdom/

- [13] Medium. (2024). AI and Wisdom. Best of times, worst of times. https://technoshaman.medium.com/ai-and-wisdom-ce0cd11db218

- [14] Logos. (2024). From Data to Discernment: Why AI Can’t Replace Cultivating of Wisdom. Logos Bible Software. https://www.logos.com/grow/why-ai-cant-replace-wisdom/